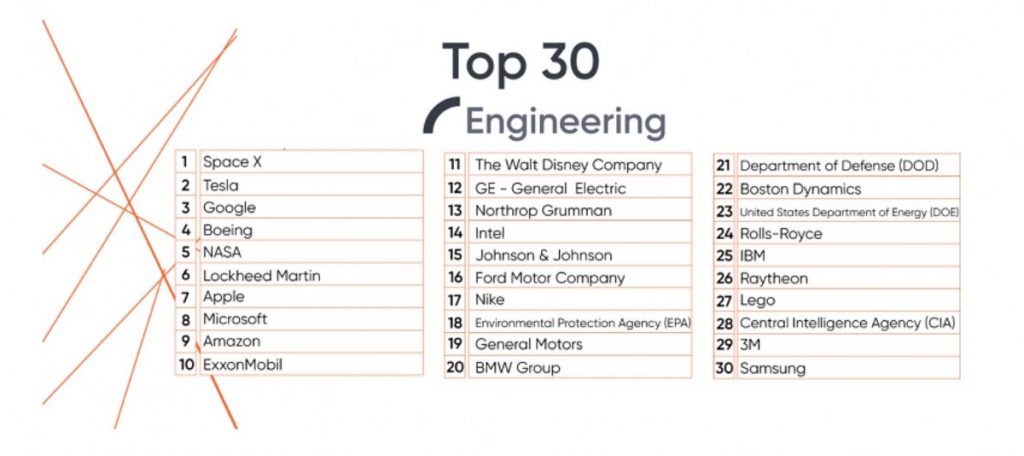

#Engineering #students have ranked @elonmusk’s SpaceX and Tesla as the most attractive #employers in the #UnitedStates, according to @UniversumGlobal.

USEPA (18th) and DOE (23rd) also rank in the top 30, reflecting students' interest in working on energy and the environment.

Top 10:

@SpaceX @Tesla @Google @Boeing @NASA @LockheedMartin @Apple @Microsoft @amazon @exxonmobil

via https://www.teslarati.com/tesla-spacex-best-employers-2019-elon-musk