Title: Tunewave

Keywords: Electroencephalography (EEG), Music Composition, Neurofeedback, Music Software, Music Memory, Electroencephalophone

Abstract

The Tunewave is a proposed device that would read one’s brain waves, interpret the music being “played” in one’s head, and convert it into a permanent form that can be played back at any time. It would also be able to project the music in real time, allowing for live “performances” and letting musicians improvise together both online and in person.

To make the Tunewave a reality, an enormous amount of research must be conducted across a wide variety of topics and fields. We must first understand how the brain works when listening to, interpreting and performing music, as well as when engaging in creative activities. This would require extensive experimentation with EEG devices such as the Emotiv, which record and interpret brain activity. Electroencephalography itself would need to be researched in depth, including both the science behind its function and its practical applications. In terms of those applications, research would also be needed into how brain-monitoring technology can translate brain activity into commands, and into what one is “hearing” in the mind’s ear.

Research: Tangible

Electroencephalography, at least in its realized form, dates back to 1924, when the German psychiatrist Hans Berger recorded the first human EEG. The brain is a network of neurons: individual cells that communicate with one another through electrical impulses carried by ionic currents. The combined effect of these impulses is detectable on the scalp via EEG. Because the signal passing between any single pair of neurons is far too faint to pick up, EEG devices instead record the collective electrical activity of large groups of neurons, thousands or more at a time, working in synchrony.
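
Why only collective activity is detectable can be illustrated with an idealized calculation (an assumption for illustration, not a model of real cortex). Take N identical sources, each producing a signal of amplitude a. If they fire in synchrony, their contributions add directly; if their phases φ_i are independent and uniformly random, they mostly cancel, and the expected power grows only in proportion to N:

```latex
\sum_{i=1}^{N} a \sin(\omega t) = N a \sin(\omega t)
\qquad \text{vs.} \qquad
\mathbb{E}\!\left[\,\Bigl|\sum_{i=1}^{N} a\, e^{j\phi_i}\Bigr|^{2}\right] = N a^{2}
\;\;\Rightarrow\;\; \text{RMS amplitude} = \sqrt{N}\, a
```

With N = 10,000 sources, synchronized activity sums to 10,000a, whereas unsynchronized activity reaches only about 100a, a hundredfold difference. This is why scalp EEG mainly reflects large groups of neurons acting in concert.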

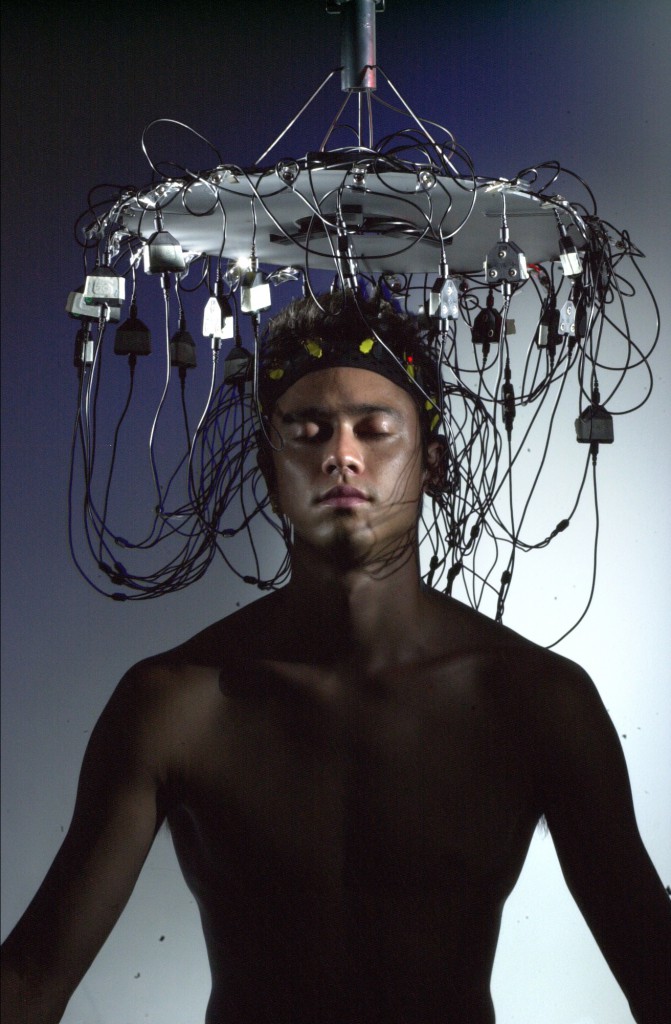

As research and information technology have progressed, EEG devices have become far more sophisticated. They use 14 or more sensors positioned across the user’s scalp, each collecting data on electrical activity in a different part of the brain. Their main practical uses today are in medicine and research: they are most frequently used to monitor and distinguish between different types of seizures, to detect neural activity in patients with brain damage, and to monitor the effects of anesthesia.

Recent years have brought further-reaching applications of EEG technology. As many people know, the language a computer “speaks” at its most basic level is binary code. The language our brains speak, by analogy, lies in the electrical communication among billions of neurons and neural networks. Linking these two ideas gives us the brain-computer interface, or BCI.

Brain-computer interfaces may very well be the next technological frontier. Already we’re seeing iterations of the technology that only a few decades ago might have been seen as science fiction. In recent years, researchers have developed brain-computer interfaces which allow human and/or animal brains to operate a cursor on a screen, or even a robotic arm.

Institutions like Samsung’s Emerging Technology lab are already seeking to bring brain-computer interfaces into everyday life, specifically into consumer electronics. In the lab, Samsung is currently testing tablets that, when paired with specially designed EEG caps resembling ski hats, let users issue commands with their brain waves. Many speculate that within the next decade we will have mapped the human brain and will be using BCIs for everything from turning off lights to operating our beloved gadgets.

The implications of these developments are massive, to say the least. To reiterate, brain-computer interfaces take information produced by the brain, interpret it, and then use it to accomplish tasks. Creating movement is one application, but researchers are now also able to create visual representations of activity in the brain’s visual pathways. In one experiment, for example, cats fitted with electrodes were shown a series of short videos; the electrical activity in their thalamus was decoded by a computer, producing videos of what the cats had seen (pictured below).

Clearly, sophisticated applications of EEG have begun to emerge. However, aural representations of brain activity have yet to be produced with such clarity. The furthest such applications have progressed is in devices called electroencephalophones (one prototype pictured below), which I will discuss further in the following section.

Philosophical Research

While the concept of the electroencephalophone has been around since the 1940s, the technology and devices bearing its name have yet to develop anywhere near their full potential. In fact, no device appears to have come close to the level of complexity I am suggesting. In her New York Times article “The Cyborg in Us All” (2011), Pagan Kennedy discusses current BCI research, among other topics. She spends time with computer scientist Gerwin Schalk, who uses platinum electrode implants placed on the surface of the brain, called electrocorticographic (ECoG) implants, to read and interpret brain activity. Because most EEG devices only have access to the electrical signals that reach the scalp, the data received from electrodes placed on the brain itself is far richer.

In the article, Schalk plays Pink Floyd’s “Another Brick in the Wall, Part 1” for a number of subjects outfitted with ECoG implants. During moments of silence within the song, one would expect the brain activity associated with processing the music to fall silent as well. Surprisingly, this was not the case: the neural activity suggested that the subjects’ brains were modeling where they expected the music to go. Schalk acknowledges that this could mean we are not far from creating actual music out of brain waves. His main interest, however, is in using neural data to produce words, allowing people who would otherwise be unable to speak to communicate via recorded neural impulses. The US Army gave Schalk a $6.3 million grant to fund this research in the hope that he would produce a device allowing soldiers to communicate via their brains.

This research suggests that the goals I am outlining here are not unattainable. While I have yet to come across any project seeking to create the device I am proposing, a number of past and ongoing projects point in a similar direction. In 1971, Finnish designer, philosopher and artist Erkki Kurenniemi designed a synthesizer dubbed the DIMI-T. The device used an EEG to monitor the alpha band of a sleeping subject’s brain waves as a means of controlling the synthesizer’s pitch. A more modern initiative, the Brainwave Music Lab, seeks “to find interactive applications of brainwave music through various trial-and-error experiments.” While interesting and certainly relevant, the project by its own admission takes a quasi-scientific approach, which is not in line with the approach we must take in developing the Tunewave.
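
Kurenniemi’s actual circuitry is not described here, so the following is only a hypothetical software analogue of the DIMI-T idea, not a reconstruction of it. It estimates what share of an EEG window’s power falls in the alpha band (assumed here to be 8 to 12 Hz) using a plain discrete Fourier transform, then maps that share onto a two-octave pitch range. The sampling rate, band limits, pitch range, and function names are all assumptions chosen for illustration.

```python
import math

SAMPLE_RATE_HZ = 128  # assumed EEG sampling rate (illustrative)


def band_power(samples, sample_rate, low_hz, high_hz):
    """Sum of squared DFT magnitudes for frequency bins within [low_hz, high_hz]."""
    n = len(samples)
    power = 0.0
    for k in range(1, n // 2 + 1):  # skip the DC bin (k = 0)
        freq = k * sample_rate / n
        if not low_hz <= freq <= high_hz:
            continue
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(samples))
        im = -sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(samples))
        power += re * re + im * im
    return power


def alpha_to_pitch(samples, sample_rate=SAMPLE_RATE_HZ,
                   low_pitch_hz=220.0, high_pitch_hz=880.0):
    """Map the share of power in the (assumed) 8-12 Hz alpha band onto a pitch in Hz."""
    total = band_power(samples, sample_rate, 0.5, sample_rate / 2)
    if total == 0:
        return low_pitch_hz  # silent input: stay at the bottom of the range
    alpha_share = band_power(samples, sample_rate, 8.0, 12.0) / total  # between 0 and 1
    # Exponential mapping: equal changes in alpha_share give equal musical intervals.
    return low_pitch_hz * (high_pitch_hz / low_pitch_hz) ** alpha_share


if __name__ == "__main__":
    one_second = range(SAMPLE_RATE_HZ)
    relaxed = [math.sin(2 * math.pi * 10 * t / SAMPLE_RATE_HZ) for t in one_second]  # 10 Hz, inside alpha
    alert = [math.sin(2 * math.pi * 25 * t / SAMPLE_RATE_HZ) for t in one_second]    # 25 Hz, outside alpha
    print(round(alpha_to_pitch(relaxed)), round(alpha_to_pitch(alert)))  # prints: 880 220
```

A real system would more likely use an FFT library and a tapered analysis window; the direct DFT simply keeps the sketch free of dependencies.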

While the implications of a device like the Tunewave are certainly exciting, they do not come without ethical considerations. Music is a fundamental facet of what makes us human, and professional and amateur musicians alike dedicate countless hours to honing their craft. What if these skills became obsolete? Toyota began developing its “Partner Robots” in 2005. These humanoid robots can, among other things, play musical instruments like the trumpet and the violin, an instrument that requires a great deal of dexterity. Performing music is one of the most human things a human can do. With advances like the Tunewave, we must balance greater accessibility of music creation against the dehumanization of art.

Full Project Description

The Tunewave will be an extremely multi-faceted project requiring an enormous amount of research and trial-and-error. To give a clearer picture of what its development would entail, I’ll break the description down into sections, each describing a different component of the project. Each component is essentially an element of an interaction diagram of the device and its function: the brain (which requires no additional description within the scope of the project), the EEG device, the computer, and the software. I will elaborate on each element below.

The EEG Device: To use electroencephalography at all, a device capable of reading the electrical impulses of brain activity must be involved. As previously mentioned, however, current technology doesn’t allow for sufficiently detailed readings without implanting electrodes in the brain, and even then no device has yet achieved the level of detail this project requires. The first stage of the project would therefore be to develop EEG technology sensitive enough to capture this level of detail from the scalp alone, without being too complex or unwieldy for the average person to use without significant or professional assistance. Emotiv Systems, the company behind the Emotiv EEG device pictured below, is certainly on the right track.

Alternatively, if the device were created in a world where brain implants were the norm for a variety of other applications (implantation is already being developed as a simple outpatient procedure), this component would be unnecessary. Unfortunately, that isn’t (yet) the world we live in.

The Computer: The EEG device would be connected, by wire or wirelessly, to a computer that must then interpret the data received. While this could just as easily be done on one’s personal computer, the user should not have to rely on a laptop or desktop PC. Instead, since the computer’s only job would be to run a single piece of software, it would be a small device that can be kept on one’s person. If the computer is wired to the EEG device, keeping it on one’s person would also eliminate the hazard of a long cable that could be tripped over accidentally and disrupt a performance. Needless to say, for the software to interpret such an enormous amount of information in real time, the computer would need to be extremely powerful, at least by today’s standards.
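
Because the computer must keep up with a continuous stream of readings, one common approach (assumed here, not specified in the proposal) is to keep only the most recent window of samples and reprocess it every few samples. The class name, window size, and hop length below are hypothetical; the hop length sets how often new output can be produced, and therefore contributes to a performance’s latency.

```python
from collections import deque


class SlidingWindow:
    """Buffers the most recent `size` samples; emits a copy every `hop` new samples once full."""

    def __init__(self, size, hop):
        if size <= 0 or hop <= 0:
            raise ValueError("size and hop must be positive")
        self.buffer = deque(maxlen=size)  # oldest samples fall off automatically
        self.hop = hop
        self._new_samples = 0

    def push(self, sample):
        """Add one sample; return the current window as a list when it is due, else None."""
        self.buffer.append(sample)
        self._new_samples += 1
        if len(self.buffer) == self.buffer.maxlen and self._new_samples >= self.hop:
            self._new_samples = 0
            return list(self.buffer)
        return None


if __name__ == "__main__":
    window = SlidingWindow(size=4, hop=2)
    for reading in range(1, 9):
        ready = window.push(reading)
        if ready is not None:
            print(ready)
    # prints [1, 2, 3, 4], then [3, 4, 5, 6], then [5, 6, 7, 8]
```

At an assumed sampling rate of 128 Hz, a 128-sample window with a 16-sample hop would yield a fresh one-second window every 125 ms. Each reading could just as well be a tuple holding one value per sensor.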

The Software: Perhaps the most complex element of the Tunewave would be the software responsible for interpreting the data received and producing an acceptable (read: amazing) result. This component would have several subcomponents of its own. The first would need to become familiar with the unique workings of the individual user’s brain. This would put the data received in context, making it easier to derive meaning. The next subcomponent would then interpret the data through a series of complex algorithms. Finally, the result of this interpretation would be projected via speakers and/or stored digitally for later playback.
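
The three stages of the software (learning the individual user, interpreting the data, and producing playable output) could be sketched roughly as follows. This is a deliberately simplified illustration under assumptions that do not come from the proposal: a single hypothetical EEG feature per time step (for example, an alpha-band share computed from one window), a per-user baseline recorded at rest, and a fixed mapping onto the C major scale written as MIDI note numbers. The real interpretation algorithms remain an open research problem.

```python
import statistics

# C major scale from C4 to C5 as MIDI note numbers (an arbitrary illustrative choice).
C_MAJOR = [60, 62, 64, 65, 67, 69, 71, 72]


class UserCalibration:
    """Stage 1: learn one user's resting baseline for a single (hypothetical) EEG feature."""

    def __init__(self, resting_values):
        self.mean = statistics.fmean(resting_values)
        # Fall back to 1.0 so a perfectly flat baseline cannot cause division by zero.
        self.spread = statistics.pstdev(resting_values) or 1.0

    def normalize(self, value):
        """Express a new reading relative to this user's baseline (a z-score)."""
        return (value - self.mean) / self.spread


def to_note(z, scale=C_MAJOR, z_limit=2.0):
    """Stage 2: clamp the z-score to [-z_limit, z_limit] and pick the closest scale degree."""
    clamped = max(-z_limit, min(z_limit, z))
    position = (clamped + z_limit) / (2 * z_limit)  # 0.0 at the bottom, 1.0 at the top
    return scale[round(position * (len(scale) - 1))]


def transcribe(feature_stream, calibration, hop_seconds=0.125):
    """Stage 3: produce (onset in seconds, MIDI note) pairs to store or play back."""
    return [
        (i * hop_seconds, to_note(calibration.normalize(value)))
        for i, value in enumerate(feature_stream)
    ]


if __name__ == "__main__":
    calibration = UserCalibration([1.0, 2.0, 3.0])  # readings taken while the user rests
    print(transcribe([2.0, 100.0, -100.0], calibration))
    # prints [(0.0, 67), (0.125, 72), (0.25, 60)]
```

Here a reading equal to the user’s baseline lands mid-scale (G4, MIDI 67), while readings far above or below it saturate at the top or bottom of the scale. Normalizing against each user’s own baseline is what lets one mapping serve many different brains.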

Project Timeline

Surprisingly, I have managed to stick fairly close to my original project timeline. It is difficult to pin exact dates on my work, as most of it has involved research on topics that blended together. I started by looking into what happens in the brain when music is heard and created. From there I studied how electroencephalography works; this is the step I struggled with most. While I feel I have achieved a basic understanding, it is still limited by my lack of knowledge in the field of neurology. It was also difficult to find out how devices like the Emotiv work beyond the most basic level, which is understandable, as I assume the companies need to protect their products. After spending some time compiling my research, I began allocating my findings to my deliverables and figuring out how best to present them. I also revised my deliverable ideas, which I explain below.

Description of Deliverables

The first deliverable will obviously be the journal. I am still working on drawings of what the device will look like in its final form; my drawing skills are rustier than I thought, and I am not familiar enough with Adobe Illustrator to create a design with the detail I would have liked. I ran into a slight roadblock with the interaction diagrams: I found that there is an entire formal modeling language (UML) surrounding them. Instead, I have made “interaction diagrams” in a more colloquial sense. As planned, the first depicts how the different components of the device relate to and communicate with one another, while the others depict how a user will interact with the device and its various components in a number of hypothetical scenarios. I am also working on a diagram of the regions of the brain, their functions, and the regions the device will monitor, with corresponding explanations; so far I have been able to produce this digitally. Rather than having the final deliverable be a user guide itself, I have decided to make it a presentation that incorporates all the other deliverables listed here into a user guide. As I’m still completing the other deliverables, I have not yet decided how the presentation will work, although I’m leaning more towards Prezi than PowerPoint.

Going forward, I would like the Tunewave to have more of a social focus. Naturally, it would take practice to use the device efficiently, let alone creatively: the user would need to learn how to use the device, and the device would need to “learn” how the user’s brain works. I would therefore like the device to include a component that connects to the internet and offers a series of “lessons,” establishing two-way communication between the device and a server. The server would receive the user’s brainwave data and use it to guide the user toward using the device more effectively. This connectivity would serve a plethora of other functions. Users could join a set of friends to create music together, or be paired randomly with other users of similar skill. Collaborative, open-source pieces would also be possible, including one played as a virtually infinite stream: a piece that never ends, made up entirely of music and sounds contributed by users in real time.