Project Title: Dream Catcher

Keywords: Dream reader, Dream Device, MRI, Electrowaves, Dream technology, Brain waves, Brain waves reader, fMRI

Project Description:

DreamCatcher is an app that would scan, collect, decode, encode and record signals from the brain during dreaming, using the principle of fMRI (functional magnetic resonance imaging). The difference is that you would not need to put anything on your head or pillow.

The data will be saved in iCloud, and you can download it with one click and read it on the go, anytime and anywhere.

There will be two optional views: text and slides. You will have the option to put the slides together and present them as a slideshow or a movie.

Setup works just like setting an alarm. You enter the date and time at which the recording of brain data starts, and the date and time at which it ends.
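The alarm-style setup can be sketched as a start/end window that the app checks against the current time. This is only an illustration: the class name `RecordingWindow` and its methods are hypothetical and not part of any existing DreamCatcher design.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class RecordingWindow:
    """Hypothetical schedule for one recording session (assumed design)."""
    start: datetime
    end: datetime

    def __post_init__(self):
        # A window that ends before it starts makes no sense, so reject it.
        if self.end <= self.start:
            raise ValueError("end must be later than start")

    def is_active(self, now: datetime) -> bool:
        """True while recording should be running (start inclusive, end exclusive)."""
        return self.start <= now < self.end

    def duration_hours(self) -> float:
        """Length of the recording window in hours."""
        return (self.end - self.start).total_seconds() / 3600


# Example: record from 11 pm until 7 am the next morning.
window = RecordingWindow(
    start=datetime(2024, 1, 1, 23, 0),
    end=datetime(2024, 1, 2, 7, 0),
)
```

Checking `window.is_active(datetime.now())` at regular intervals would then tell the app when to start and stop recording.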

To sign in you will need a Mental Password. It can be any image you like, and you have to use the same one every time. Its purpose is twofold: no one else can access your dream data, and you cannot use the app to read other people's minds. If the app can read your brain data, then it can read anybody's, so reading requires permission, and the password is that permission.

The DreamCatcher APP

Flowchart of the Process

Research:

UC Berkeley

Currently researchers at UC Berkeley have figured out how to extract what you’re picturing inside your head, and they can play it back on video.

http://www.dvice.com/archives/2011/09/brain-imaging-c.php

Professor Jack Gallant and his team took the research even further. They focused their work on computational modeling of the visual system. They study the visual system because it is more approachable than the cognitive system (which mediates complex thoughts).

Reconstructing movies using brain scans has been challenging because the blood flow signals measured using fMRI change much more slowly than the neural signals that encode dynamic information in movies. For this reason, most previous attempts to decode brain activity have focused on static images.

The human visual system consists of a hierarchically organized, highly interconnected network of several dozen distinct areas. Each area can be viewed as a computational module that represents different aspects of the visual scene. Some areas process the simple structural features of a scene, such as edge orientation, local motion and texture. Others process complex semantic features, such as faces, animals and places. The laboratory focuses on discovering the way each of these areas represents the visual world, and on how these multiple representations are modulated by attention, learning and memory. Because the human visual system is exquisitely adapted to process natural images and movies, the laboratory focuses most of its effort on natural stimuli.

One way to think about visual processing is in terms of neural coding. Each visual area encodes certain information about a visual scene, and that information must be decoded by downstream areas.

Both encoding and decoding processes can, in theory, be described by an appropriate computational model of the stimulus-response mapping function of each area. Therefore, the descriptions of visual function are posed in terms of quantitative computational encoding models that can be used to read out brain activity, in order to classify, identify or reconstruct mental events. In the popular press this is often called “brain reading”.
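To make the idea of an encoding model concrete, here is a toy sketch. It assumes a linear model in which each voxel's response is a weighted sum of stimulus features, and it "reads out" brain activity by picking the candidate stimulus whose predicted response correlates best with the measured one. The weights, feature values and function names are all invented for illustration; the lab's actual models are far richer.

```python
from math import sqrt


def predict_response(weights, features):
    """Encoding step: predict one response per voxel as a weighted sum of features."""
    return [sum(w * f for w, f in zip(row, features)) for row in weights]


def correlation(a, b):
    """Pearson correlation between two equal-length lists (0.0 if either is constant)."""
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)


def identify(weights, candidates, observed):
    """Decoding step: return the candidate whose predicted response best matches observed."""
    return max(
        candidates,
        key=lambda name: correlation(predict_response(weights, candidates[name]), observed),
    )


# Made-up example: 3 voxels, 2 stimulus features.
weights = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
candidates = {"face": [1.0, 0.0], "house": [0.0, 1.0]}
observed = [0.9, 0.1, 0.6]  # hypothetical measured activity
best_match = identify(weights, candidates, observed)
```

With these made-up numbers the measured activity lines up with the prediction for "face", so that is the stimulus the sketch identifies.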

Most of the success stories in reading brain activity and decoding visual information involve functional magnetic resonance imaging (fMRI), a rapidly developing technique for making non-invasive measurements of brain activity. This matters because the accuracy of encoding and decoding models inevitably depends on the quality of the brain activity measurements.

Previously, Gallant and fellow researchers recorded brain activity in the visual cortex while a subject viewed black-and-white photographs. They then built a computational model that enabled them to predict with overwhelming accuracy which picture the subject was looking at.

In their latest experiment, researchers say they have solved a much more difficult problem by actually decoding brain signals generated by moving pictures.

Subjects watched two separate sets of Hollywood movie trailers while fMRI was used to measure blood flow through the visual cortex, the part of the brain that processes visual information. On the computer, the brain was divided into small, three-dimensional cubes known as volumetric pixels, or “voxels.”

The brain activity recorded while subjects viewed the first set of clips was fed into a computer program that learned, second by second, to associate visual patterns in the movie with the corresponding brain activity.

Brain activity evoked by the second set of clips was used to test the movie reconstruction algorithm. This was done by feeding 18 million seconds of random YouTube videos into the computer program so that it could predict the brain activity that each film clip would most likely evoke in each subject.

Finally, the 100 clips that the computer program decided were most similar to the clip that the subject had probably seen were merged to produce a blurry yet continuous reconstruction of the original movie.
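The last two steps (ranking library clips by how well their predicted brain activity matches the measured activity, then merging the best matches) can be sketched as below. This is a simplified stand-in, not the published algorithm: it assumes each library clip is a single small frame stored as a list of pixel values, it uses squared distance as the similarity measure, and the names `reconstruct`, `predicted` and `frame` are hypothetical.

```python
def squared_distance(a, b):
    """Sum of squared differences between two equal-length activity patterns."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def reconstruct(observed, library, top_k):
    """Average the frames of the top_k clips whose predicted activity is closest to observed."""
    # Closest predicted activity first.
    ranked = sorted(library, key=lambda clip: squared_distance(clip["predicted"], observed))
    best = ranked[:top_k]
    n_pixels = len(best[0]["frame"])
    # Pixel-by-pixel average, which is what makes the result blurry.
    return [sum(clip["frame"][i] for clip in best) / len(best) for i in range(n_pixels)]


# Made-up library of three clips with two "voxels" and two "pixels" each.
library = [
    {"predicted": [1.0, 0.0], "frame": [0.0, 1.0]},
    {"predicted": [0.9, 0.1], "frame": [0.5, 0.5]},
    {"predicted": [0.0, 1.0], "frame": [1.0, 0.0]},
]
observed = [1.0, 0.0]  # hypothetical measured activity
blurry_frame = reconstruct(observed, library, top_k=2)
```

In the study described above the library held millions of seconds of video and the top 100 clips were merged; here `top_k=2` plays that role on a three-clip toy library.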

https://sites.google.com/site/gallantlabucb/publications/nishimoto-et-al-2011

http://newscenter.berkeley.edu/2011/09/22/brain-movies/

ATR Computational Neuroscience Laboratories

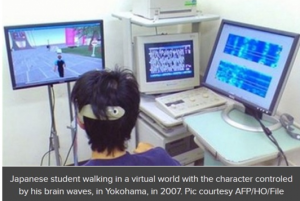

Similar studies have been conducted by many scientific labs. One of them, ATR Computational Neuroscience Laboratories in Japan, has so far only managed to reproduce simple images from the brain, but “by applying this technology, it may become possible to record and replay subjective images that people perceive like dreams,” the private institute said in a statement.

The technology works by capturing the electrical signals that are sent from the eye’s retina to the brain’s visual cortex. For the experiment, the team first figured out people’s individual brain patterns by showing them some 400 different still images, and then showed them the six letters in the word “neuron”. They then succeeded in reconstructing the letters on a computer screen by measuring the subjects’ brain activity. The researchers revealed the breakthrough in a study unveiled ahead of publication in the US journal Neuron. Exciting technology to be sure, but if my experiences in listening to other people recount their dreams are anything to go by, tuning into other people’s dreams is likely to be a baffling experience.

The next step for researchers will be to study how to visualize images inside people’s minds that have not been presented before – a technology that could make it possible to record people’s dreams.

YouTube link: https://www.youtube.com/watch?v=MElU0UW0V3Q

Benefits:

It will benefit the exploration of ourselves, and perhaps of a reality that we never knew existed or of our past existences.

By exploring the power of our brain and pushing the limits to find new technologies, we will learn new ways of communicating, not with buttons and hard drives but with our brain: a telepathy-communication or telepathetaion.

Another benefit is that the discovery will give us a better understanding of what goes on in the minds of people who cannot communicate verbally, such as stroke victims, coma patients and people with neurodegenerative diseases.

It may also lay the groundwork for brain-machine interfaces, so that people with cerebral palsy or paralysis, for example, can guide computers with their minds.

Timeline:

- 6 Journal Entries for the project progress

- Weekly check for new technologies that are related to the project.

- Pre-Proposal submission

- Revision of the paper including any additional notes from classmates and teacher.

- 3-minute presentation of the Midterm paper

- Rewriting according to any additional notes and information

- Final Project presentation

Deliverables:

- 6 Journal Entries

- Chart on how the brain operates and where the information will be collected from

- Chart on how the device will work and record dreams

- Visual of the APP

- How the APP will be set up to work

- Verbal and Visual presentation

- Slideshow presentation

communicating from within dreams using eye motions

Note: Each brain is mapped once at the start, and that map then functions as a stored “diagram” to work from in perpetuity, so the brain never has to have a device attached to it again.